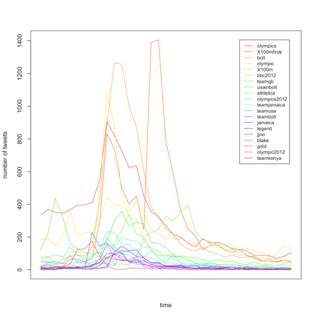

I am currently interested in applying social network analysis (SNA) to a whole host of interesting problems. In the TRIDEC project, we are looking at the application of SNA to disaster data. One of my students, Maryam Fatemi, is investigating the use of community detection algorithms and recommendation systems.

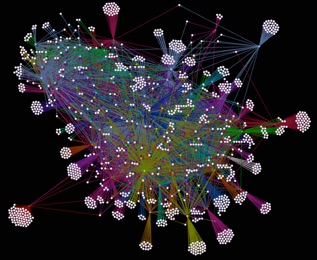

The image above shows the communities of co-present people in the Reality Mining dataset, as detected from Bluetooth proximity data.
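To make the idea of Bluetooth co-presence communities a little more concrete, here is a minimal sketch. It is not the method used to produce the figure. It assumes a hypothetical input format in which each Bluetooth scan is a `(timestamp, set of device IDs seen together)` record. It counts how often each pair of devices is co-present, builds a weighted graph from those counts, and then groups devices with a simple label propagation community detection algorithm. The function names, the `min_count` threshold and the toy data are all illustrative assumptions.

```python
from collections import defaultdict
from itertools import combinations


def build_copresence_graph(scans, min_count=1):
    """Build a weighted co-presence graph (hypothetical input format).

    scans: iterable of (timestamp, set_of_device_ids) records, one per scan window.
    min_count: hypothetical threshold; pairs seen together fewer times are dropped.
    Returns an adjacency dict: graph[a][b] = number of scans where a and b co-occur.
    """
    weights = defaultdict(int)
    for _, devices in scans:
        for a, b in combinations(sorted(devices), 2):
            weights[(a, b)] += 1
    graph = defaultdict(dict)
    for (a, b), w in weights.items():
        if w >= min_count:
            graph[a][b] = w
            graph[b][a] = w
    return graph


def label_propagation(graph, max_iter=100):
    """Weighted label propagation: each node repeatedly adopts the label carrying
    the most edge weight among its neighbours, until no label changes."""
    labels = {node: node for node in graph}  # every node starts in its own community
    for _ in range(max_iter):
        changed = False
        for node in sorted(graph):  # fixed order keeps the result deterministic
            scores = defaultdict(int)
            for nbr, w in graph[node].items():
                scores[labels[nbr]] += w
            best = max(scores.values())
            # Break ties by picking the smallest label.
            new_label = min(label for label, s in scores.items() if s == best)
            if new_label != labels[node]:
                labels[node] = new_label
                changed = True
        if not changed:
            break
    communities = defaultdict(set)
    for node, label in labels.items():
        communities[label].add(node)
    return sorted(communities.values(), key=lambda c: sorted(c))


# Toy, made-up scan records (not Reality Mining data).
scans = [
    (0, {"a", "b", "c"}),
    (1, {"a", "b"}),
    (2, {"d", "e"}),
    (3, {"d", "e", "f"}),
    (4, {"c", "d"}),
]
communities = label_propagation(build_copresence_graph(scans))
print([sorted(c) for c in communities])  # [['a', 'b', 'c'], ['d', 'e', 'f']]
```

Label propagation is used here only because it is short and needs no external libraries. Modularity-based methods are a common alternative for this kind of graph, and a real analysis would also need to choose sensible scan windows and co-presence thresholds.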